Collaboration with Yakima Chief Hops


TIFFANY PITRA, SENIOR MANAGER OF HOP RESEARCH, YAKIMA CHIEF HOPS

On Hop Sensory, AI, and Trying to Capture the Essence of ‘Dank’

One thing we Uiltjes have learned over the years is that hop sensory analysts and researchers are usually the most interesting people in the room. They’re just as heavily tattooed as brewers, but they bring a cool “Weird Science” vibe to the table. They’re almost always opinionated, wear funky socks, and tend to answer any questions we throw their way with verve. A little bit emo and nerdy—exactly how we like them.

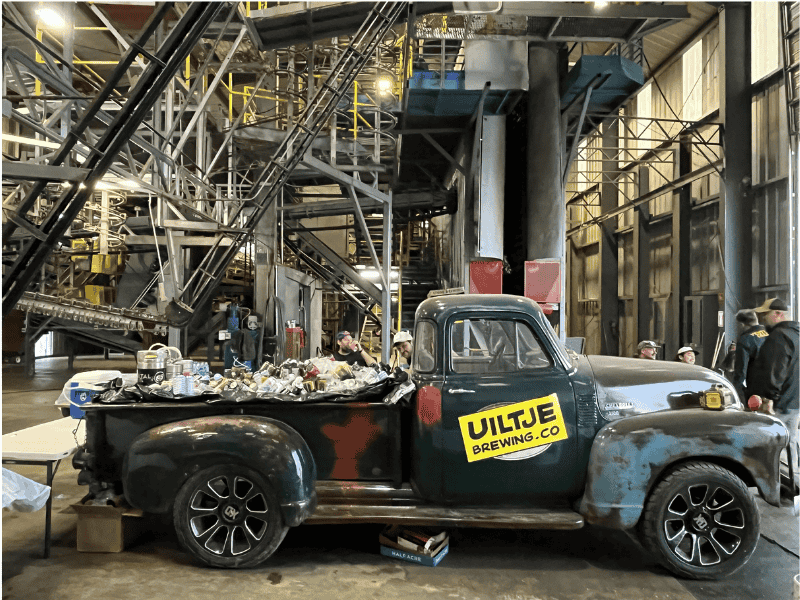

Like every other brewer on the planet, we source a ton of hops from Yakima Chief Hops (YCH). Some of them, like the Krush used in our recently released Yeti Krush, are having their moment. But no matter the hop lot, every batch must pass a rigorous sensory assessment before being shipped out. During our recent trip to Yakima, we stopped by YCH’s sensory lab to ask Tiffany Pitra, Senior Manager of Hop Research, about how she scores her hops. Anyone even remotely interested in craft beer should probably read what she has to say.

UILTJE: We have a lot of questions—are you ready?

TP: When you put it that way, I’m not sure.

UILTJE: Sensory assessments and hop scoring are probably the most important aspects of craft brewing that no one knows anything about.

TP: Agreed. It influences everything from purchasing decisions to how a lot gets used: extract, pellets, or the selection table.

UILTJE: Sensory assessments are part people, part science, and even part AI. Let’s start with AI.

TP: We use AI to streamline and standardize our sensory scoring, especially during the busy harvest season.

UILTJE: How do you use AI, specifically?

TP: We take photos and upload them to our AI model for visual assessment. It quickly identifies factors such as cone color, mildew, and uniformity.

UILTJE: How did you do things before AI?

TP: Two or three of us would rate the hops independently, write our scores on clipboards, calculate the average, and then upload them. Now one person takes a photo and the AI model rates it. If the score is within one point of the average of the human ratings, we accept it.
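The acceptance rule Tiffany describes comes down to simple arithmetic. The sketch below is only an illustration, not YCH's actual system: the function name `accept_ai_score`, the `tolerance` parameter, the sample scores, and the choice to treat a difference of exactly one point as acceptable are all assumptions made for the example.

```python
from statistics import mean


def accept_ai_score(ai_score, human_scores, tolerance=1.0):
    """Return True when the AI score is within `tolerance` points
    of the average of the independent human ratings.

    Hypothetical helper: the scoring scale and the inclusive
    comparison are assumptions, not details from the interview.
    """
    if not human_scores:
        raise ValueError("need at least one human rating")
    panel_average = mean(human_scores)
    return abs(ai_score - panel_average) <= tolerance


# Three panelists rate a lot independently; the AI model rates the photo.
human_ratings = [6, 7, 8]  # made-up scores, panel average is 7
print(accept_ai_score(7.5, human_ratings))  # half a point off the average
print(accept_ai_score(9.0, human_ratings))  # two points off the average
```

With these made-up numbers, the first score would be accepted and the second would go back for another look.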

UILTJE: What are the advantages of AI—time savings?

TP: Speed is the most important factor, but AI also eliminates human bias and fatigue and ensures greater consistency across the board.

UILTJE: Now for the people side. How do you standardize the scoring?

TP: First, we use panel booths with red lighting and double-door pass-throughs (both standard controlled-environment practices designed to ensure the integrity of the testing). During the harvest, we are more flexible. Our panelists can evaluate samples anywhere, as long as they avoid distractions such as food.

UILTJE: Can you walk us through the process? You receive a box of samples from Farm X…

TP: Exactly.

UILTJE: What if the hops arrive damaged?

TP: We start by checking for obvious pest and disease damage. Severe aphid damage could result in rejection.

UILTJE: Then you sort them and present them to the panelists. Who are the panelists?

TP: We rely on production, sales, and other internal staff, which can make scheduling difficult.

UILTJE: How do you encourage their participation?

TP: It is included in employees' performance goals, with financial incentives.

UILTJE: Do you have to train them?

TP: Yes. It’s a major part of what we do. We start with an in-depth training workshop at the beginning of the year and hold occasional training sessions throughout the year.

UILTJE: Do you find that brewers are better at assessing hops than “ordinary” people?

TP: We have found that trained panelists who participate in both hop and beer sensory evaluations tend to perform exceptionally well. The additional skill set provided by beer sensory knowledge is very helpful, so a brewer with this background may be able to pick it up quickly. But we believe that everyone, even without that experience or background, can be trained and has potential. It just takes proper education and consistent practice.

UILTJE: How many hop samples do panelists evaluate each day?

TP: During harvest, up to 48, with a one-hour break between each set of 12.

UILTJE: How do you keep panelists from drifting in their assessments?

TP: We monitor them closely and use training sessions to correct autopilot behavior.

UILTJE: Do the panelists have to hand-rub all their own hops?

TP: We grind the hops for them, a process we standardized to increase throughput. Panelists would rather have that than wash their hands 48 times a day.

UILTJE: Do you use panelists year-round or just during the harvest?

TP: Year-round. In Yakima, we focus on leaf sensory analysis, and at our Sunnyside location, we focus on pellet sensory analysis. We switch to pellet sensory analysis after receiving the bales and continue our assessments through January, then we transition to research and product development projects.

UILTJE: Now for the scientific part of the assessment. Can you trace off-flavors to specific causes?

TP: Yes. Burnt rubber, onion, and garlic can come from a late harvest. Musty notes from under-dried hops. Cheesy and dried fruit flavors from oxidation.

UILTJE: Which varieties are most sensitive to timing?

TP: Mosaic is unpredictable and complex. Citra seems more stable. Amarillo is difficult as well. It has a long harvest window, and we tend to get more off-notes in leaf form than in pellet form.

UILTJE: Who decides when to harvest?

TP: For proprietary brands, Yakima Chief Ranches (YCR) sets harvest windows and specifications. Growers can adjust these based on field conditions.

UILTJE: So now the big question, the ‘real’ reason we wanted to speak with you today. Looking around, we’re standing in a sensory lab with a table full of diesel-scented candles, burnt rubber, plastic/waxy scents, herbal and spiced extracts, sweet aromatics, onion/garlic concoctions, and so on. Basically, the entire sensory chart is here in physical form. So where does ‘dank’ fit into all this? We’ve been debating this in Haarlem for years; maybe you can help us out.

TP [laughing]: "Dank" is hard to define. We tried training people using different cannabis strains, but it didn’t help. We are now working on a "dankness" predictor using internal and external data.

UILTJE: "Dank" is a term brewers use all the time, yet it’s the hardest descriptor to define.

TP: You can’t put dankness in a jar. It lacks a clear reference point.

UILTJE: Can you chemically analyze dankness?

TP: We compared the terpene profiles of hops and cannabis. They overlap, but the levels differ too much to use a single marker.

UILTJE: Why do we even use the word "dank" then?

TP: It became popular with West Coast IPAs and is now a buzzword. I’m afraid we’ll have to put up with it for now.
